![Implementing Microsoft Exact Data Match (EDM) Part 2]()

When we last left our superheroes, they were on a mission to configure EDM to help protect the world! Change a few small things in that last sentence and it sums up what happened in Part 1 of this blog series. We learned how EDM can greatly help ensure that sensitive data uploaded to the cloud, such as PII and PHI, is properly discovered and protected by DLP.

The next step in our EDM setup is to create a Rule Package XML file. This is probably the most crucial step in setting up EDM, because the rule pack sets the criteria for how a match is made. I am going to walk you through setting up the rule pack and explain each criterion and its use.

Before we even create the rule pack, we need to configure a custom Sensitive Information Type that defines the SRN (remember, the Superhero Registration Number). In our CSV file you can see that the SRN is a 5-digit number, which makes this pretty easy to set up. We will use the regex `\d{5}` to define it.

To create this new custom sensitive info type, I used the Compliance Center (compliance.microsoft.com):

- Log in to the compliance center, select “Data classification” from the menu on the left, then select “Sensitive info types” and then “+Create info type”. Choose a name and description for the type and click Next

- On the next screen click on “+ Add an element”

- Select “Regular expression” from the “Detect content containing” drop-down box, enter the regex `\d{5}`, leave all the other options at their defaults, and click Next

- Review the settings and click Finish

- Click Yes to Test the created sensitive type

- If the test does not automatically show up, find the newly created sensitive info type and click on it, then click “Test type”

- Either click and drag or browse to your CSV file and then click Test

- You should then see the results (in my case the file has 21 records and all 21 SRNs were found). Click Finish

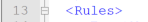

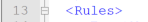
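Before relying on the Compliance Center test, you can sanity-check the pattern locally. The sketch below is a Python approximation, not how the service evaluates regex. It assumes the CSV header from our SIPA export (SRN, Firstname, Lastname, Nickname, Home) and counts rows whose SRN matches `\d{5}`. It also shows why a bounded variant such as `\b\d{5}\b` might cut false positives from longer numbers. That variant is a hypothetical alternative, not what this post configures. Microsoft's regex engine may not treat word boundaries exactly as Python does, so verify any change with the service's own Test type step.

```python
import csv
import io
import re

# The pattern configured for the SRN sensitive info type.
SRN_PATTERN = re.compile(r"\d{5}")
# Hypothetical stricter variant: refuses to match inside longer digit runs.
SRN_PATTERN_BOUNDED = re.compile(r"\b\d{5}\b")


def count_srn_rows(csv_text: str, pattern: "re.Pattern[str]") -> int:
    """Count data rows whose SRN column is exactly one match of the pattern."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return sum(1 for row in reader if pattern.fullmatch(row["SRN"].strip()))


if __name__ == "__main__":
    export = (
        "SRN,Firstname,Lastname,Nickname,Home\n"
        "95101,Clark,Kent,Superman,Krypton\n"
        "95102,Diana,Prince,Wonder Woman,Paradise Island\n"
    )
    print(count_srn_rows(export, SRN_PATTERN))  # 2

    # An unbounded \d{5} also fires inside longer numbers, such as an order number.
    note = "Order 1234567890 shipped; SRN 95103 on file."
    print(SRN_PATTERN.findall(note))          # ['12345', '67890', '95103']
    print(SRN_PATTERN_BOUNDED.findall(note))  # ['95103']
```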

Now that the base custom sensitive info type is created, we can move on to the rule pack file. I highly recommend starting with the sample rule pack from the documentation. On line 2 of the sample rule pack you will see `<RulePack id="fd098e03-1796-41a5-8ab6-198c93c62b11">`. You will need to replace this GUID with a new one; use the New-GUID PowerShell cmdlet to generate it.
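If you would rather script this step, here is a minimal Python sketch of what New-GUID does by hand. It replaces every GUID in a freshly downloaded copy of the sample rule pack. Wherever the same old GUID repeats, it reuses the same new value, so an id and any reference to it stay in sync. This is a hypothetical helper, not part of Microsoft's tooling. Run it only on the untouched sample, because it would also overwrite any GUID you later add on purpose, such as a custom type referenced by GUID in a classification attribute.

```python
import re
import uuid
from typing import Dict, Tuple

# Matches a GUID such as fd098e03-1796-41a5-8ab6-198c93c62b11 (either case).
GUID_RE = re.compile(
    r"[0-9a-fA-F]{8}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{12}"
)


def regenerate_guids(xml_text: str) -> Tuple[str, Dict[str, str]]:
    """Swap every GUID for a new random one (like New-GUID), mapping each
    distinct old GUID, compared case-insensitively, to exactly one new GUID."""
    mapping: Dict[str, str] = {}

    def swap(match: "re.Match[str]") -> str:
        old = match.group(0).lower()
        if old not in mapping:
            mapping[old] = str(uuid.uuid4())
        return mapping[old]

    return GUID_RE.sub(swap, xml_text), mapping


if __name__ == "__main__":
    # Hypothetical fragment: <Example> is a placeholder, not a real rule pack element.
    sample = (
        '<RulePack id="fd098e03-1796-41a5-8ab6-198c93c62b11">'
        '<Example id="00000000-0000-0000-0000-000000000001"/>'
        '<Example ref="FD098E03-1796-41A5-8AB6-198C93C62B11"/>'
    )
    new_text, mapping = regenerate_guids(sample)
    print(new_text)
```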

Replace the existing GUID with the new one you just created. On line 4 of the sample rule pack file you will see another GUID, this one for the Publisher ID. Again, use the New-GUID cmdlet to generate a new GUID and replace the existing one. Lines 5 and 6 handle language localization and are set for English; change them if you need to. Lines 7, 8 and 9 hold the publisher name and the rule pack name and description; fill in whatever you like. Here are the first 12 lines of my rule pack after making these changes to the sample file:

OK, now comes the real work: deciding what you want to consider an exact match. I am going to go line by line and explain the attributes and options you have for configuring this. Line 13 just starts the Rules section:

Line 14: notice another GUID after “ExactMatch id”; replace it using the New-GUID cmdlet again. The next attribute is “patternsProximity”, currently set to 300. Patterns proximity tells the service how many characters before and after the IdMatch (in our case the Superhero Registration Number, or SRN) to search for additional corroborative evidence, such as first name and last name. More information about the patternsProximity attribute can be found here. The following was taken from that link:

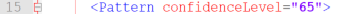

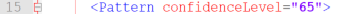

I am keeping patternsProximity at 300 for my rule pack; you can change it if you like. The next attribute is “dataStore”. This needs to match the DataStore name entered in the Schema file, which, as you remember, is SIPAIdentities. The last attribute on line 14 is “recommendedConfidence”, set to 65 in the sample file. The confidence level expresses how confident the service is that the identified data really is what it is looking for; the higher the confidence level, the better the match. This attribute is a suggested base confidence level. I am going to keep it at 65 and will explain more about confidence levels in the next few lines.
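To make the proximity idea concrete, here is a simplified Python model of it. It assumes the window is a plain count of characters before and after each SRN match. It also assumes a corroborating value counts if it appears anywhere inside that window. The service's real tokenization and matching are more involved, so treat this as an illustration of the concept only. The function name and sample text are hypothetical.

```python
import re
from typing import Iterable, List, Set, Tuple

SRN_RE = re.compile(r"\b\d{5}\b")


def corroboration_near(
    text: str, terms: Iterable[str], proximity: int = 300
) -> List[Tuple[str, Set[str]]]:
    """For each SRN-like match, return the corroborating terms found within
    `proximity` characters before or after it (case-insensitive substring test)."""
    lowered = text.lower()
    results = []
    for match in SRN_RE.finditer(text):
        start = max(0, match.start() - proximity)
        end = min(len(text), match.end() + proximity)
        window = lowered[start:end]
        # Plain substring test: "Kent" would also hit "Kentucky"; fine for a sketch.
        found = {term for term in terms if term.lower() in window}
        results.append((match.group(0), found))
    return results


if __name__ == "__main__":
    doc = (
        "SRN 95101 belongs to Clark Kent."
        + " " + "x" * 400 + " "
        + "Superman was last seen on Krypton."
    )
    terms = ["Clark", "Kent", "Superman", "Krypton"]
    print(corroboration_near(doc, terms))                  # only Clark and Kent are in range
    print(corroboration_near(doc, terms, proximity=1000))  # all four are in range
```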

Line 15 has only one attribute, the Pattern “confidenceLevel”, which is set to the same value as the “recommendedConfidence” attribute on the previous line. This confidence level is different: it sets the confidence level for the pattern that follows, which matches on the SRN alone (keep reading).

Line 16 finally sets up what is being looked for. It corresponds directly to the Schema file and the fields we set as searchable. The first field we will set up is SRN. This line also uses the custom sensitive info type “Superhero-Registration-Number(SRN)”, so the SRN field is detected with the type and regex we set up earlier. The first attribute is “IdMatch matches”, where we replace SSN with our SRN field. The “classification” attribute is where we use the name of the custom type we created, “Superhero-Registration-Number(SRN)”. You can use either the full name or the GUID of the custom type; you would need PowerShell to get the GUID.

Line 17 just closes the pattern match for SRN alone; no changes are needed.

Line 18 is where we begin to look for supporting data from the datastore: data indicating that the SRN found is more than just a random 5-digit number. We do this by adding criteria that look for additional data within the 300-character proximity. We want to see whether the superhero’s first name, last name, nickname or home location appears in the document or email being scanned. This is where confidence levels really come in.

Let’s say Mike, one of the HR reps for SIPA, has a document covering all of the superheroes’ benefits. If this document were sent to external people, say a member of the Legion of Doom, that would be a serious issue. When we create a DLP policy (coming later in this series), we want to know, if the data is sent out, how confident we are that it can identify a superhero’s secret identity. We set the criteria for those confidence levels starting on line 18.

I am going to keep line 18’s confidence level at 75. This line also starts a new pattern lookup.

Line 19 defines what we are searching for: again the SRN, classified with the custom sensitive info type we created earlier. This line is basically a duplicate of line 16.

Lines 20-26 set the criteria for the additional matches we want to find near the SRN, within the proximity limit set on line 14. The sample file looks for at least 3 matches among the 6 fields listed on the following lines. The maxMatches attribute is not really needed here: it is set to 100, well above the six fields available to match. If this were the only condition we were using, we could omit maxMatches.

Note: Find additional information about minMatches and maxMatches here.

I will be using maxMatches for our superheroes, and here is why. Using minMatches and maxMatches together allows tiering of the confidence level. I can set the criteria for a 75% confidence match to require 2 of the 4 remaining fields (Firstname, Lastname, Nickname and Home), then set 85% confidence for 3 of 4, and 95% confidence when all 4 are found. One thing to note: for all of these, the system must first find the SRN and then find the other fields within the 300-character proximity limit.

Here is what the updated lines look like (lines 25 and 26 just close that pattern section):

Now I am going to add lines for the 85% and 95% matches. They look almost identical to lines 20-26; the only changes are the confidence level and the minMatches and maxMatches attributes. Here are all three patterns; again, notice that the only things that change between them are the confidence level and the min and max match attributes. The reason I am adding in different
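The three-tier setup described above can be modeled in a few lines of Python. This sketch rests on two assumptions of my own, not the service's documented behavior. First, each tier's maxMatches equals its minMatches (2/2, 3/3, 4/4). Second, when several patterns match, the highest confidence level is the one that matters. The base level of 65 is the SRN-only pattern from line 15.

```python
from dataclasses import dataclass
from typing import Iterable, Optional

CORROBORATING_FIELDS = ("Firstname", "Lastname", "Nickname", "Home")
BASE_CONFIDENCE = 65  # SRN found on its own (the pattern on line 15)


@dataclass(frozen=True)
class Tier:
    confidence: int
    min_matches: int
    max_matches: int


# Assumed tiers: 2, 3 or all 4 corroborating fields near the SRN.
TIERS = (Tier(75, 2, 2), Tier(85, 3, 3), Tier(95, 4, 4))


def confidence_for(srn_found: bool, fields_found: Iterable[str]) -> Optional[int]:
    """Return the highest confidence level whose pattern matches, or None
    when no SRN was found (every pattern requires the SRN first)."""
    if not srn_found:
        return None
    count = len(set(fields_found) & set(CORROBORATING_FIELDS))
    best = BASE_CONFIDENCE
    for tier in TIERS:
        if tier.min_matches <= count <= tier.max_matches:
            best = max(best, tier.confidence)
    return best


if __name__ == "__main__":
    print(confidence_for(True, ["Firstname"]))              # 65
    print(confidence_for(True, ["Firstname", "Lastname"]))  # 75
    print(confidence_for(True, CORROBORATING_FIELDS))       # 95
```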

Now let’s add the other searchable field from our Schema file, the Nickname field. You will notice we used the Nickname field in the examples above; nothing wrong with that. But now we are going to key on the Nickname field first and then look for additional fields to corroborate the data.

Note: Just like the SRN searchable field, I had to create a custom sensitive info type to set the classification for the Nickname field. I first tried to create a regex to look for one or two words. I thought I had the correct regex, but it did not work in actual testing, so I switched to a dictionary. I went with a dictionary rather than keywords because keywords are meant for just a few words and have a 50-character limit, whereas a dictionary can contain upwards of 100,000 terms.

- To create the new custom sensitive info type, select Create info type from Data classification > Sensitive info types, give it a name and description, and click Next

- Select Add element, then select Dictionary (Large keywords) and click Add a dictionary

- Click on Create new keyword dictionaries

- Give the dictionary a name and then add the nicknames, each on a separate line and click Save

- Select the newly created keyword dictionary and click Add

- Click Next

- Review the information and click Finish
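Here is a rough Python illustration of why an exact term list behaves better than a loose “one or two words” regex for nicknames. The regex below is a hypothetical example, not the one I actually tried. The dictionary matching is a simplified stand-in for how the service evaluates a keyword dictionary: case-insensitive, whole-term matches only.

```python
import re
from typing import List

# Hypothetical "one or two capitalized words" regex: plausible, but far too broad.
NAIVE_NICKNAME = re.compile(r"\b[A-Z][a-z]+(?: [A-Z][a-z]+)?\b")

# Keyword-dictionary style: one exact term per line, as typed into the dictionary.
NICKNAME_DICTIONARY = """Superman
Wonder Woman
Iron Man
Black Widow
Professor X"""


def dictionary_matches(text: str, dictionary: str) -> List[str]:
    """Return dictionary terms that occur in text as whole terms (case-insensitive)."""
    terms = [line.strip() for line in dictionary.splitlines() if line.strip()]
    found = []
    for term in terms:
        # (?<!\w) and (?!\w) stop "Iron Man" from matching inside "Iron Mango".
        if re.search(r"(?<!\w)" + re.escape(term) + r"(?!\w)", text, re.IGNORECASE):
            found.append(term)
    return found


if __name__ == "__main__":
    memo = "Meeting notes: Wonder Woman and Black Widow met Steve from Accounting."
    # ['Meeting', 'Wonder Woman', 'Black Widow', 'Steve', 'Accounting']
    print(NAIVE_NICKNAME.findall(memo))
    # ['Wonder Woman', 'Black Widow']
    print(dictionary_matches(memo, NICKNAME_DICTIONARY))
```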

I am going to make this very similar to the SRN section we just completed; just make sure a new GUID is created for the ExactMatch id. Take a look:

OK, now that the criteria are set, all we need to do is name the new sensitive info types in the last section of the rule pack file, LocalizedStrings.

The sample file has only one entry in this section; our file needs two, one for SRN and one for Nickname. These are straightforward and simply set the language, name and description for the sensitive info types we just configured. The most important thing to be aware of is that you need to copy each GUID you created for the ExactMatch configuration into the localization section. See below: I copied the two GUIDs and then created the naming entries.

Here is a link to the entire rulepack.xml file. I encourage you to use it only as a reference rather than copying and pasting it into your own rule pack file; putting the file together yourself is very informative and helps you learn the system.
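Before uploading, a quick local check can catch the two easiest mistakes in this file. The first is an ExactMatch id with no matching LocalizedStrings entry. The second is a dataStore value that does not match the schema’s DataStore name. This Python sketch uses plain regular expressions rather than a full XML parser. It assumes the attribute names used in the sample file, as I understand it: id on ExactMatch, idRef on the localized Resource entry, and dataStore. Compare against your own copy of the sample before relying on it.

```python
import re
from typing import List

# Assumed attribute names from the sample rule pack; adjust if yours differ.
EXACT_MATCH_ID = re.compile(r'<ExactMatch\b[^>]*?\bid\s*=\s*"([^"]+)"')
RESOURCE_REF = re.compile(r'\bidRef\s*=\s*"([^"]+)"')
DATASTORE_ATTR = re.compile(r'\bdataStore\s*=\s*"([^"]+)"')


def check_rule_pack(xml_text: str, schema_datastore: str) -> List[str]:
    """Return human-readable problems; an empty list means both checks passed."""
    problems = []
    exact_ids = {value.lower() for value in EXACT_MATCH_ID.findall(xml_text)}
    localized = {value.lower() for value in RESOURCE_REF.findall(xml_text)}
    for missing in sorted(exact_ids - localized):
        problems.append(f"ExactMatch {missing} has no LocalizedStrings entry")
    for store in DATASTORE_ATTR.findall(xml_text):
        if store != schema_datastore:
            problems.append(
                f"dataStore {store!r} does not match schema name {schema_datastore!r}"
            )
    return problems


if __name__ == "__main__":
    with open("rulepack.xml", encoding="utf-8") as handle:
        for problem in check_rule_pack(handle.read(), "SIPAIdentities"):
            print(problem)
```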

Now that the rule pack is done, we need to upload it to the service. To do that, connect to Remote PowerShell again, just as we did for the Schema file. Here are the instructions for connecting to the Office 365 Security & Compliance Center using PowerShell when you have multi-factor authentication.

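Once connected, run the commands below (make sure you are in the directory that contains the rulepack.xml file):

```powershell
# Read the rule pack as raw bytes (Windows PowerShell 5.1 syntax;
# PowerShell 7 uses -AsByteStream instead of -Encoding Byte)
$rulepack = Get-Content .\rulepack.xml -Encoding Byte -ReadCount 0

# Upload the rule pack to the Security & Compliance Center
New-DlpSensitiveInformationTypeRulePackage -FileData $rulepack
```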

The next item on the agenda is to index and upload the sensitive data. To do this, you will need to download the EDM Upload Agent, which is available in step 1 of the previous link. When you go to that link to get the download, pay attention to the setup needed for a security group. You will need to create an Office 365 security group, which you can do from the Microsoft Admin portal or the Azure AD Admin portal. Create the group, name it EDM_DataUploaders, and add to it the user account you have been using for this project.

Download and install the EDM Upload Agent, and make sure you are a member of the newly created group and a local admin on the machine you will be uploading from.

- Start a command prompt and run it as an administrator.

- Change to the EDM Upload Agent directory, `C:\Program Files\Microsoft\EdmUploadAgent`.

- The first step, needed only once, is to authorize the EDM Upload Agent for the proper tenant. Run the following command and sign in with your tenant credentials (the account you just added to the security group): `EdmUploadAgent.exe /Authorize`

- Next, index and upload the CSV file. The syntax for the command is `EdmUploadAgent.exe /UploadData /DataStoreName <DataStoreName> /DataFile <DataFilePath> /HashLocation <HashedFileLocation>`. For me, the command looks like this (be sure to pre-create the Hash folder):

`EdmUploadAgent.exe /UploadData /DataStoreName SIPAIdentities /DataFile C:\Scripts\EDM\Superheros-CSV.csv /HashLocation C:\Scripts\EDM\Hash`

- Depending on the size of the source file, the upload might take some time. To check its status, use the tool’s other commands (type `EdmUploadAgent.exe` on its own to list them); the `/GetSession` switch shows the status.
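The upload agent expects the hash folder to exist and the CSV columns to line up with the schema, so a small pre-flight script can save you a failed run. The Python sketch below is hypothetical helper code. It checks the CSV header against the field names you put in edm.xml, counts the data rows, and creates the hash folder. It then builds, but does not run, the same /UploadData command shown above.

```python
import csv
from pathlib import Path
from typing import List, Sequence, Tuple


def prepare_upload(
    data_file: str, hash_dir: str, datastore: str, schema_fields: Sequence[str]
) -> Tuple[int, List[str]]:
    """Validate the CSV header, count data rows, pre-create the hash folder,
    and return (row_count, command) without running the upload agent."""
    data_path = Path(data_file)
    # utf-8-sig strips the byte-order mark that some spreadsheet exports add.
    with data_path.open(newline="", encoding="utf-8-sig") as handle:
        reader = csv.reader(handle)
        header = [name.strip() for name in next(reader)]
        row_count = sum(1 for row in reader if any(cell.strip() for cell in row))
    missing = [name for name in schema_fields if name not in header]
    if missing:
        raise ValueError(f"CSV header is missing schema fields: {missing}")
    # The post says to pre-create the Hash folder before uploading.
    Path(hash_dir).mkdir(parents=True, exist_ok=True)
    command = [
        "EdmUploadAgent.exe", "/UploadData",
        "/DataStoreName", datastore,
        "/DataFile", str(data_path),
        "/HashLocation", str(hash_dir),
    ]
    return row_count, command
```

For the files used in this post, you would call `prepare_upload(r"C:\Scripts\EDM\Superheros-CSV.csv", r"C:\Scripts\EDM\Hash", "SIPAIdentities", ["SRN", "Firstname", "Lastname", "Nickname", "Home"])`, then run the returned command yourself from the elevated prompt.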

My file was very small, only 22 rows including the header, so it took no time at all. At the time of writing, the service supports up to 10 million rows with 5 searchable fields, and Microsoft is working on increasing both limits.

Note: You can split very large datastores into multiple smaller datastores. The benefit is that you can use a data source larger than the current limits. The downside is that you will need to configure a separate Schema file and a separate rule pack for each datastore. You will also end up with separate sensitive info types for each datastore, so you need to make sure your DLP policies reference all of them. You can have one DLP policy, but it will need to look for SRN_DS1, SRN_DS2, SRN_DS3 and so on.
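If you do split a large export, each smaller file needs the same header row so it lines up with its own schema. Here is a minimal Python sketch, assuming a row cap you choose to stay under the service limit. The 10 million figure above was the limit at the time of writing. Naming each chunk and uploading it as its own datastore is left to you.

```python
import csv
import io
from typing import List


def split_csv(csv_text: str, max_rows: int) -> List[str]:
    """Split CSV text into chunks of at most max_rows data rows,
    repeating the header row at the top of every chunk."""
    if max_rows < 1:
        raise ValueError("max_rows must be at least 1")
    reader = csv.reader(io.StringIO(csv_text))
    header = next(reader)
    chunks: List[List[List[str]]] = []
    current: List[List[str]] = []
    for row in reader:
        current.append(row)
        if len(current) == max_rows:
            chunks.append(current)
            current = []
    if current:
        chunks.append(current)

    outputs = []
    for rows in chunks:
        buffer = io.StringIO()
        writer = csv.writer(buffer, lineterminator="\n")
        writer.writerow(header)
        writer.writerows(rows)
        outputs.append(buffer.getvalue())
    return outputs
```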

This completes Part 2 of the series. While we only really worked on the rule pack in this part, I hope you can see how important it is to your entire EDM solution. Next up, in Part 3, we will dive into creating DLP policies and doing some testing!


On behalf of the entire Microsoft 365 community, I am incredibly excited to announce the general availability of our Records Management solution to help meet your legal, business and regulatory recordkeeping obligations.

As remote work becomes the new normal, securing and governing your company’s most critical data is of paramount importance. Records Management provides you with greater depth in protecting and governing critical data. With Records Management, you can:

- Classify, retain, review, dispose, and manage content without compromising productivity or data security

- Leverage machine learning capabilities to identify and classify regulatory, legal, and business critical records at scale

- Demonstrate compliance with regulations through defensible audit trails and proof of destruction

We look forward to hearing from all of you on how Records Management is helping you meet your compliance needs.

Get started

- Eligible Microsoft 365 E5 customers can start using Records Management in the Compliance Center or learn how to try or buy a Microsoft 365 subscription.

- Read this blog by Alym Rayani to learn about additional details of Records Management capabilities.

- Learn more about Records Management in our documentation.

- Attend an upcoming technical webinar:

EMEA: May 26, 2020 16:00 GMT (Calendar invite, Attendee link)

North America: May 26, 2020 12:00 PST (Calendar invite, Attendee link)

FAQ on Records Management

- Where can I access Microsoft 365 Records Management?

- In the Microsoft 365 compliance center, go to Solutions > Records management

- Before my organization can use Records Management, does every user in the tenant need a license that entitles them to use it?

- Why can I see the Records Management solution if I’m not licensed for it?

- At this time, any customer can see the solution even if they are not licensed for it, although not all functionality will work as expected. In the near future this will change, and you won’t be able to see records management options unless you are appropriately licensed.

- How is this solution different from Information Governance?

- While our Information Governance solution focuses on providing a simple way to keep the data you want and delete what you don’t, the Records Management solution is geared towards meeting the record-keeping requirements of your business policies and external regulations. This will be the only location where you can create record labels or take advantage of some of our more advanced processes such as disposition review.

- How is this solution different from SharePoint’s in-place records management or records center?

- This solution is our next evolution in providing Microsoft 365 customers with records management scenarios. It uses a different underlying technology than our legacy functionality in SharePoint, and also goes across Microsoft 365 beyond just SharePoint.

- This new solution is where our future investments in records management will be made, and we recommend that SharePoint Online customers using SharePoint’s in-place records management, content organizer, or the SharePoint records center evaluate migrating to this new way of managing records.

- What does declaring something as a record do?

- Our online documentation provides a detailed description of the enforcements we add to an item that has been declared a record. At a high level, declaring content to be a record prevents any edits to that item, and it will be preserved for the period of time you specify.

- Is Records Management supported in Teams?

- Yes, users can share, co-author (if unlocked), and access records in Teams through the app, web or mobile. Thanks to the integration with SharePoint document library views, you can also add the retention label to the default view of the Teams document library and see which retention label is applied to each file. To declare files as records or edit retention labels from Teams, you must do this in SharePoint by clicking “Open in SharePoint” on the “Files” tab. We plan to support more records management capabilities in Teams and will share more details through the public roadmap as we get closer to the date.

- Can I manage all record and non-record labels, policies and process in records management?

- Yes. Records management in the Microsoft 365 compliance center is the place to manage your complete retention schedules and processes, even for content that you don’t declare as records.

- Where can I learn more about records management?

- Where can I submit feedback or request new features?

- Do you have additional features coming to this solution?



Sean McNeill


Microsoft launched Exact Data Match (EDM) in August 2019. This new capability enhances an organization’s ability to identify and accurately target specific data. EDM goes beyond checking for data that matches patterns: it creates a datastore, or dictionary, of actual corporate data, such as employee or customer-specific information, to help ensure that data is not sent via email or shared with external users.

EDM can help reduce what is probably one of the biggest issues with Data Loss Prevention (DLP): false positives. A DLP false positive occurs when data is treated as sensitive to the company but really is not. Microsoft has over 99 built-in sensitive information types, but most of them rely on pattern matching using regular expressions (regex), sequences of characters that define a search pattern. Even with regex, patterns are hard to define. Let’s look at the Social Security Number (SSN).

An SSN is a 9-digit number assigned to each worker in the United States. It is used to identify and track a person’s wages or self-employment earnings, and then to monitor their Social Security benefits once they begin. With everyone having an SSN, it would seem easy to define what one is: a 9-digit number. However, an SSN is actually pretty hard to identify. People write their SSNs in many ways, but the most common are 123456789, 123-45-6789 and 123 45 6789. Prior to 2011 there was strict formatting that required certain parts of the number to fall within specific ranges; SSNs issued after 2011 do not have that strict formatting. A common way to identify an SSN is to look for these three formats together with keywords such as SSN, Social Security, Soc Sec, SSN#, etc.

Above you can see the built-in SSN Sensitive Information Type. Microsoft has published “What the sensitive information types look for” and here is the specific link for the SSN type.

Note: You can use PowerShell to review the rule pack for the built-in sensitive info types, and then use these instructions to customize a built-in sensitive information type.

With EDM, a healthcare company can now securely upload a datastore containing all of its patients’ names, addresses, MRNs (Medical Record Numbers), SSNs, etc. When an internal user tries to share a file located in their OneDrive for Business (OD4B), or emails a document containing patient information, the Microsoft DLP service scans the document and can prevent it from being shared or emailed outside the organization. EDM makes this possible by letting the DLP service look for the specific SSNs of the company’s customers or patients instead of any number that looks like an SSN.
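The difference between pattern matching and exact matching is easy to see in a toy example. This Python sketch is illustrative only. The regex covers just the three layouts listed earlier and is not the built-in SSN type’s definition. A plain set of normalized numbers stands in for the EDM datastore, whereas the real service works from hashed values produced by the EDM Upload Agent, not a list of SSNs in the clear. The sample numbers are made up.

```python
import re
from typing import Iterable, List

# Three common layouts: 123456789, 123-45-6789, 123 45 6789.
# The backreference \1 forces both separators to be the same (or both absent).
SSN_LIKE = re.compile(r"\b\d{3}([- ]?)\d{2}\1\d{4}\b")


def normalize(value: str) -> str:
    """Keep digits only, so 123-45-6789 and 123456789 compare equal."""
    return re.sub(r"\D", "", value)


def pattern_hits(text: str) -> List[str]:
    """Everything that merely looks like an SSN (pattern matching)."""
    return [m.group(0) for m in SSN_LIKE.finditer(text)]


def exact_hits(text: str, known_values: Iterable[str]) -> List[str]:
    """Only SSN-like strings that are actually in the known set (EDM-style)."""
    known = {normalize(v) for v in known_values}
    return [hit for hit in pattern_hits(text) if normalize(hit) in known]


if __name__ == "__main__":
    text = "Patient SSN 123-45-6789. Invoice 987654321. Ref 555 12 3456."
    print(pattern_hits(text))               # three SSN-shaped strings
    print(exact_hits(text, ["123456789"]))  # only the one in the datastore
```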

Let’s get going with implementing EDM. For this I decided to use superheroes and their hidden identities. We all work at the Superhero Identity Protection Agency (SIPA) and at SIPA, our number one goal is the protection of the secret identity of the world’s superheroes. We have a database that contains everything you could want to know about a superhero. To create our EDM Datastore we’ll export data from the database.

Here is the CSV file that we’ll use as the basis for our EDM Datastore.

| SRN | Firstname | Lastname | Nickname | Home |
|---|---|---|---|---|
| 95101 | Clark | Kent | Superman | Krypton |
| 95102 | Diana | Prince | Wonder Woman | Paradise Island |
| 95103 | Bruce | Banner | Hulk | Ohio |
| 95104 | Tony | Stark | Iron Man | Los Angeles |
| 95105 | Peter | Parker | Spiderman | New York |
| 95106 | Thor | Odinson | Thor | Asgard |
| 95107 | Natasha | Romanoff | Black Widow | Moscow |
| 95108 | Steve | Rogers | Captain America | New York |
| 95109 | Bruce | Wayne | Batman | Gotham |
| 95110 | Wade | Wilson | Deadpool | New York |
| 95111 | Arthur | Curry | Aquaman | Atlantis |
| 95112 | Barry | Allen | Flash | Central City |
| 95113 | Hal | Jordan | Green Lantern | Coast City |
| 95114 | Carol | Danvers | Captain Marvel | Los Angeles |
| 95115 | Clint | Barton | Hawkeye | Classified |
| 95116 | Bobby | Drake | Iceman | New York |
| 95117 | Scott | Summers | Cyclops | Alaska |
| 95118 | Ororo | Munroe | Storm | Kenya |
| 95119 | T’Challa | | Black Panther | Wakanda |
| 95120 | James | Howlett | Wolverine | Canada |
| 95121 | Charles | Xavier | Professor X | New York |

In the table above you can see how the data looks. Notice that we have a header row. The Superhero Registration Number (SRN) identifies each superhero. We also exported their first and last names, their superhero name (Nickname) and their home origin.

The documentation for creating custom sensitive information types with EDM is located here. I highly recommend you reference it, as it is very informative and will be kept up to date. The first step is to define the schema for our EDM datastore, which we do with XML and the CSV file we exported from our SIPA database.

A sample schema is in the documentation. For our schema, we first need to determine which fields should be searchable. Searchable fields are the key fields that are critical for identification. How you configure your schema is up to you, but for SIPA we have determined that SRN and Nickname are the fields we want to be searchable.

Note: Searchable fields should be unique within the datastore, or as unique as possible. We know an SRN is never duplicated in the Superhero Database, which is why it was chosen. We also know there is only one Superman, one Black Widow, one Wolverine, and so on, which is why Nickname was chosen as a searchable field. It does not make sense, at least in this instance, to use something like Firstname as a searchable field. Even the sample CSV has a duplicate first name (Bruce Banner and Bruce Wayne), and once you think about the documents and artifacts used within SIPA, someone could mention Steve and mean Steve Jones in Database Management rather than Steve Rogers, Captain America.
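A quick way to vet candidate searchable fields is to count duplicate values per column before writing the schema. This Python sketch runs against an excerpt of the SIPA table. Run against the full export, it would flag Firstname (Bruce) and Home (Los Angeles, New York), while SRN and Nickname come back clean.

```python
import csv
import io
from collections import Counter
from typing import List

# Excerpt of the SIPA export used earlier in this post.
SAMPLE = """SRN,Firstname,Lastname,Nickname,Home
95103,Bruce,Banner,Hulk,Ohio
95104,Tony,Stark,Iron Man,Los Angeles
95105,Peter,Parker,Spiderman,New York
95108,Steve,Rogers,Captain America,New York
95109,Bruce,Wayne,Batman,Gotham
95114,Carol,Danvers,Captain Marvel,Los Angeles
"""


def duplicate_values(csv_text: str, field: str) -> List[str]:
    """Return the non-empty values that appear more than once in a column."""
    reader = csv.DictReader(io.StringIO(csv_text))
    counts = Counter(row[field].strip() for row in reader if row[field].strip())
    return sorted(value for value, n in counts.items() if n > 1)


if __name__ == "__main__":
    for column in ("SRN", "Firstname", "Lastname", "Nickname", "Home"):
        print(column, duplicate_values(SAMPLE, column))
```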

Now that we’ve identified the searchable fields, all we need to do is create the XML file.

Let’s go over the XML file. I highlighted the second row above because it is important: notice the `DataStore name="SIPAIdentities"` entry, which sets the name of the datastore the schema applies to. The field names were all taken from the header row of the CSV file. You can also see that I set the SRN and Nickname fields as searchable. I named the schema file edm.xml.
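If you would rather generate edm.xml from the CSV header than type it by hand, here is a Python sketch that uses only the standard library. The element and attribute names and the namespace URI are assumptions: they reflect the documentation’s sample schema at the time of writing, as I understand it. That means EdmSchema, then DataStore with name, description and version, then Field with name and searchable. Diff the output against the current sample before uploading it.

```python
import xml.etree.ElementTree as ET
from typing import Iterable

# Assumed namespace from the sample schema; verify against the current docs.
EDM_NAMESPACE = "http://schemas.microsoft.com/office/2018/edm"


def build_edm_schema(
    datastore: str,
    fields: Iterable[str],
    searchable: Iterable[str],
    description: str = "",
) -> str:
    """Return schema XML with one Field per CSV column, marking searchable ones."""
    searchable_set = set(searchable)
    # Setting xmlns as a plain attribute keeps the output free of ns0: prefixes.
    root = ET.Element("EdmSchema", {"xmlns": EDM_NAMESPACE})
    store = ET.SubElement(
        root, "DataStore", {"name": datastore, "description": description, "version": "1"}
    )
    for field in fields:
        attrs = {"name": field}
        if field in searchable_set:
            attrs["searchable"] = "true"
        ET.SubElement(store, "Field", attrs)
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    header = ["SRN", "Firstname", "Lastname", "Nickname", "Home"]
    print(build_edm_schema("SIPAIdentities", header, {"SRN", "Nickname"}, "SIPA identities"))
```

Writing the result to edm.xml (for example with `Path("edm.xml").write_text(xml_text, encoding="utf-8")`) gives you a file you can upload with the commands in the steps below.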

Now that the schema file is ready, we need to upload it to the service. Currently this is done via PowerShell; Microsoft plans to add GUI interfaces in the near future. Here are instructions for connecting with PowerShell. If you have Multi-factor Authentication (MFA) enabled (you should ALL HAVE MULTI-FACTOR ENABLED for Admin accounts), here are the PowerShell connection instructions with MFA enabled. By the way, I’m using a demo tenant for this setup, and I highly recommend testing things in a demo tenant before enabling them in your production tenant. I’m also using a Global Admin account; you can check out the permission structure for the Security & Compliance Center here.

Connecting and uploading Schema file:

1. Launch the Microsoft Exchange Online PowerShell Module that you downloaded from the instructions above.

2. Type Connect-IPPSSession, enter your username in the account sign-in screen, and click Next

3. Enter your password and click Sign in

4. Enter your MFA Code – this will depend on how you have, or have not, configured MFA, click Verify

5. Now you’re connected to the Security and Compliance Center Remote PowerShell

6. Change the directory to the location you saved your edm.xml file

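7. Enter the following commands to upload the Schema file:

   ```powershell
   # Read edm.xml as raw bytes (Windows PowerShell 5.1 syntax;
   # PowerShell 7 uses -AsByteStream instead of -Encoding Byte)
   $edmSchemaXml = Get-Content .\edm.xml -Encoding Byte -ReadCount 0

   # Upload the schema; -Confirm:$true asks you to confirm the action
   New-DlpEdmSchema -FileData $edmSchemaXml -Confirm:$true
   ```

   Confirm the action when prompted.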

8. You now have a datastore Schema uploaded and ready.

This wraps up Part 1. We now understand more about EDM and why it’s helpful, and we have begun the journey of setting up EDM to protect those who protect us, the superheroes! Please check in for Part 2, where we will continue to learn more about EDM, DLP and the superheroes, and get the EDM configuration wrapped up!


Hosted by Terranova Security and sponsored by Microsoft, keynote by Gartner.

Aware and vigilant end users are key to unlocking the full potential of your security technologies. Any part of your cybersecurity strategy that involves users—whether it’s data protection or email security—depends on your users’ security awareness, engagement, and alertness. To truly protect our organizations, we must not only empower our colleagues to protect themselves but also build an organizational culture that prioritizes security.

Security Awareness Virtual Summit

We are co-sponsoring Terranova Security’s Security Awareness Virtual Summit, coming to a laptop near you on May 5th, 2020, from 12:00 pm to 3:00 pm ET. Register now to hear from Gartner Senior Director Brian Reed, Lise Lapointe (CEO of Terranova Security), and @Brandon Koeller, Microsoft Security Principal PM Lead.

Ask your questions to a panel of experts, including @Blythe Price and Erin Csonaki, Program Managers for Microsoft Cyber Security Awareness and Training. Participate in a virtual workshop that will walk you through designing a custom security training program for your organization and leave armed with knowledge that will help you boost completion rates, identify the right frequency, format and length for your training, and monitor effectiveness.

Why Simulated Attack is essential to your Security Awareness Training at 12:45 pm ET

Tune in to Brandon’s session to learn how to leverage simulated attack to detect and quantify your user risk. This session gives you a sneak peek of upcoming product innovations. Simulated phishing attacks which are context-specific to your business needs help you detect and quantify user susceptibility. And targeted Terranova Security training, customized for user vulnerability level, engagement level, and context, remediates user risk. Our solution automates administration and monitoring end-to-end, simplifying processes like payload management and user targeting to a few clicks, and delivers robust analytics to enable rich reporting. Save your spot now to attend this session.

Expert Panel at 2:00 pm ET

To learn more about how we design and deploy phish simulation and training within Microsoft, and optimizing for engagement and completion, join Blythe Price and Erin Csonaki at the expert panel at 2:00 pm. They will be in conversation with Lise Lapointe, author of the book The Human Fix to Human Risk and Gartner’s Brian Reed. Blythe and Erin will share tips, best practices, and expertise gleaned from designing and managing Microsoft’s cyber security awareness program.

We hope to see many of you on May 5! Register here to learn how to maximize the impact of your security awareness program.

In this weekly discussion of latest news and topics around Microsoft 365, hosts – Vesa Juvonen (Microsoft), Waldek Mastykarz (Rencore), are joined by Albert-Jan Schot – CTO and MVP at Portiva – Utrecht, Netherlands.

The group discusses differences in development for on-prem (one framework, add boxes) vs cloud (many frameworks, throttling), for business productivity stack (integrate stuff) vs Azure (expose stuff) and for open-source projects (rinse and repeat). Unclear? Watch the episode.

Why develop for M365 as opposed to Azure? It’s not that you choose one or the other; the two are intertwined and converging in a cloud-first world. Azure tools and services support so much that we end up doing AI, infrastructure, security and identity in the process of delivering business solutions. M365 is like an OS providing many services. Our focus is integrating and building on top of M365, within feature-rich environments such as Teams, Outlook and SharePoint, where our customers conduct their daily business.

This episode was recorded on Tuesday, April 14, 2020.

Got feedback, ideas, other input – please do let us know!